Artificial Intelligence has quickly become a boardroom priority. Enterprises are investing heavily in AI-powered products, internal automation, and data-driven decision-making — all in pursuit of efficiency, innovation, and competitive advantage.

But there’s a problem most leaders don’t talk about openly:

The majority of enterprise AI projects fail.

Depending on the source and methodology, estimates suggest that between 80% and 95% of AI initiatives never reach production or fail to deliver measurable business value.

More recent research confirms the pattern:

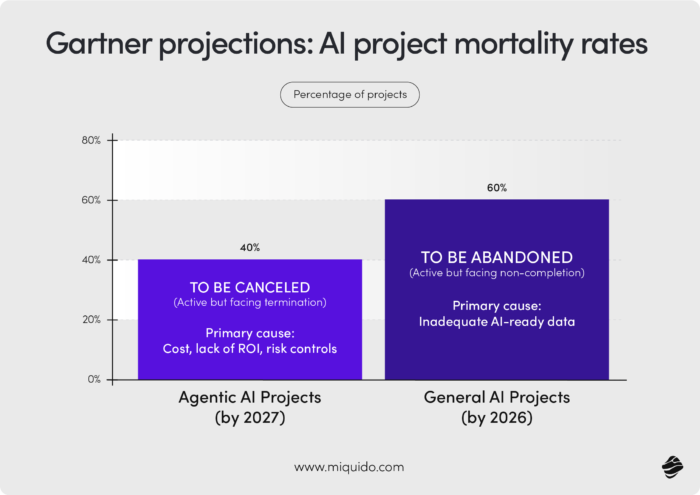

- Gartner predicts that over 40% of agentic AI projects will be canceled by 2027, primarily due to escalating costs, unclear business value, and inadequate risk controls.

- The same firm estimates that up to 60% of AI projects will be abandoned due to a lack of AI-ready data.

- According to McKinsey’s State of AI 2025 report, while 88% of organizations now use AI in at least one function, only about one-third are scaling it, and just ~6% achieve significant EBIT impact.

This gap between adoption and impact is where most companies get stuck.

I call it pilot purgatory — a place where organizations run experiments, build prototypes, and showcase innovation… but fail to translate any of it into meaningful business outcomes.

The good news? These failures are not random. They follow clear, repeatable patterns.

And once you understand them, you can avoid them.

In this article, you will learn:

- Why most enterprise AI projects fail despite massive investment and executive support

- The real reasons behind poor outcomes — from weak data foundations to misaligned strategy

- How “shiny object syndrome” and starting with technology instead of business problems derail AI initiatives

- What makes AI different from traditional software — and why probabilistic systems require new thinking

- Why 90% accuracy is often not enough, especially in high-stakes enterprise environments

- The most common traps, including the fine-tuning trap and poorly implemented RAG systems

- How to evaluate whether a feature truly needs AI or should rely on simpler, rule-based logic

- Proven ways to avoid failure, including starting small, validating early, and scaling what works

- What successful enterprise AI adoption examples have in common

- How to align AI initiatives with real business impact, not just usage or hype

- Why data, not models, is your biggest competitive advantage in the age of AI

Top 7 reasons enterprise AI projects fail

After leading AI initiatives across fintech, healthcare, eCommerce, and beyond, I’ve seen the same root causes emerge again and again. Importantly, AI rarely fails because of the model itself.

It fails because of decisions made before the first line of code is written.

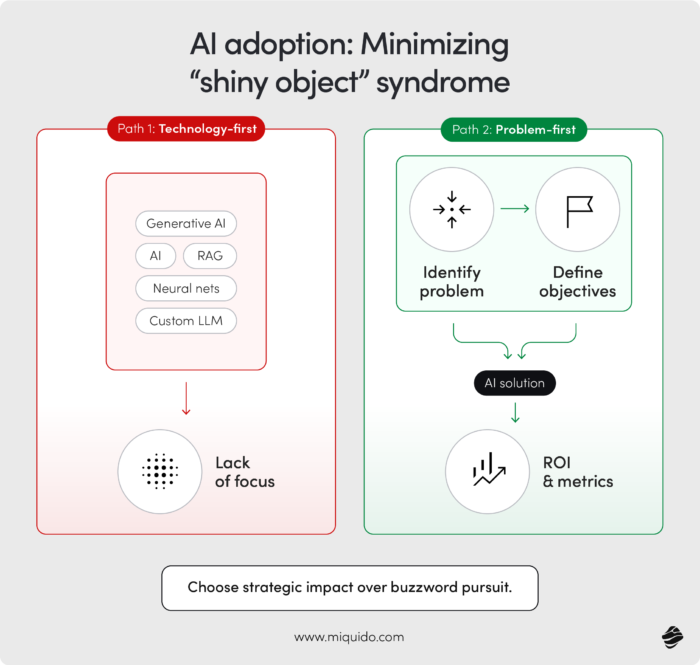

1. Shiny object syndrome: Starting with technology, not the problem

One of the most common anti-patterns in enterprise AI adoption is starting with a solution:

“We need AI in our product.”

From there, teams scramble to find a use case.

This leads to what I call feature-first thinking:

- Chatbots added where search would work better

- Recommendation engines without meaningful personalization

- Automation applied to processes that aren’t bottlenecks

The result? Features that look impressive in demos but fail in real usage.

The correct approach is the opposite:

Start with a clearly defined problem — ideally one tied to time, cost, or operational inefficiency — and only then evaluate whether AI is the right tool.

2. Weak data foundations: The silent project killer

If there’s one factor that consistently determines success or failure, it’s data readiness.

Organizations often assume they are “data-rich.” In reality, they are:

- Data-fragmented

- Data-inconsistent

- Data-inaccessible

- Or worse — legally restricted from using it

This is precisely why Gartner estimates that up to 60% of AI projects will be abandoned for lack of AI-ready data.

AI systems don’t create value from nothing. They amplify what already exists.

Garbage in, garbage out is not just a cliché — it’s the defining law of AI systems.

And here’s the strategic insight many companies miss:

Your data — not your model — is your moat.

3. Overestimating AI capabilities

AI is powerful, but it’s also fundamentally different from traditional software.

It’s probabilistic, not deterministic.

That means:

- It can produce different outputs for the same input

- It can fail in unexpected ways

- It requires continuous validation

A common mistake I see is assuming that:

“If it works in testing, it will work in production.”

In reality, internal testing typically covers a narrow set of scenarios. Real users introduce variability at scale.

There’s also a mathematical effect most teams ignore: compounding failure probability.

Even highly accurate systems degrade when combined into workflows:

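- At 99.9% accuracy per step, end-to-end reliability drops below 50% once a process reaches roughly 700 steps (assuming independent errors)

- At 97% accuracy per step, a 20-step workflow succeeds only about 54% of the time, and the failure rate passes 50% at 23 steps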

This is why many AI-powered user experiences feel inconsistent or unreliable — even when individual components perform well.
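The compounding effect is easy to check yourself. Here is a minimal sketch, assuming every step fails independently and has the same accuracy (real workflows rarely behave this cleanly, so treat it as an approximation). The function names are illustrative, not from any library.

```python
import math


def workflow_reliability(step_accuracy: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return step_accuracy ** steps


def steps_until_below(step_accuracy: float, target: float = 0.5) -> int:
    """Smallest number of steps at which end-to-end reliability falls below target."""
    # Solve step_accuracy ** n < target for the smallest integer n.
    return math.floor(math.log(target) / math.log(step_accuracy)) + 1


for accuracy in (0.999, 0.97):
    n = steps_until_below(accuracy)
    print(f"{accuracy:.1%} per step: reliability falls below 50% at {n} steps")

# A 20-step workflow at 97% per step still succeeds a little over half the time.
print(f"20 steps at 97%: {workflow_reliability(0.97, 20):.1%}")
```

Under these assumptions the 50% line is crossed at 693 steps for 99.9% accuracy and at 23 steps for 97% accuracy.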

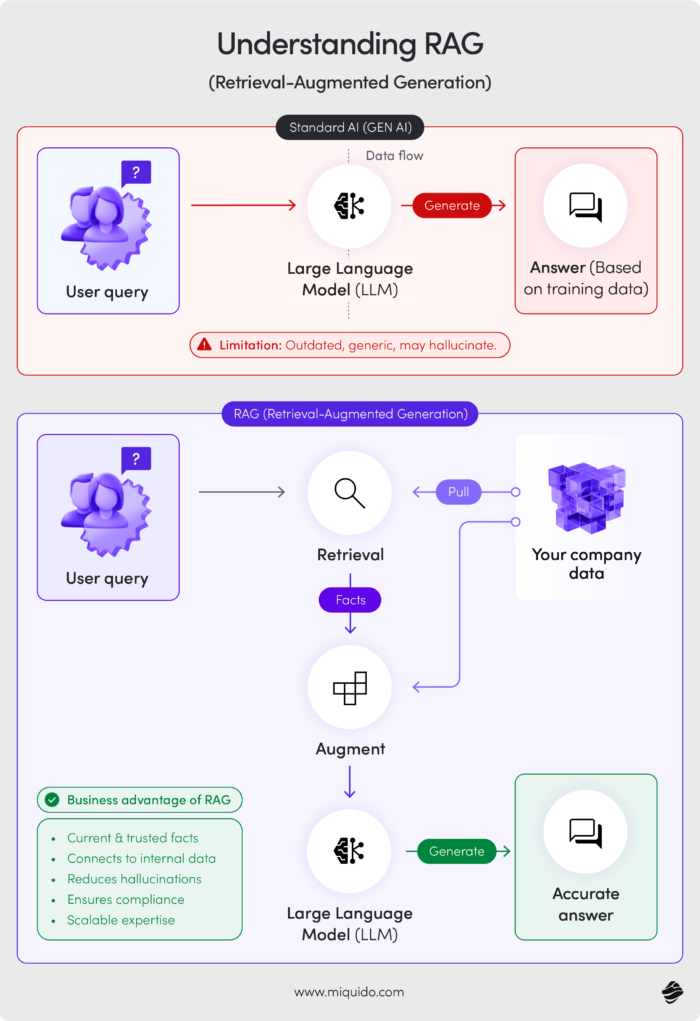

4. The “fine-tuning trap”

Another common misconception is that success in AI comes from fine-tuning models.

In reality, most enterprise value today comes from:

- Context injection (RAG)

- Domain-specific knowledge integration

- Workflow design

Retrieval-Augmented Generation (RAG) enables models to generate responses based on curated, up-to-date data rather than relying solely on training data.

But RAG is not a shortcut.

Poorly implemented RAG systems fail because:

- Context is irrelevant or outdated

- Data is poorly structured

- Retrieval quality is inconsistent

Without disciplined data engineering, even advanced architectures won’t deliver value.
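To make the failure modes above concrete, here is a toy retrieval step that guards against two of them: outdated context and irrelevant context. It is a sketch only. The word-overlap scoring stands in for a real embedding model, and `Document`, `retrieve`, `build_prompt`, and the thresholds are all hypothetical names and values chosen for illustration.

```python
import math
from collections import Counter
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Document:
    text: str
    updated: date  # last time the content was verified


def _vector(text: str) -> Counter:
    # Bag-of-words stand-in for an embedding (assumption for this sketch).
    return Counter(text.lower().split())


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: List[Document], today: date,
             max_age_days: int = 365, min_score: float = 0.2, top_k: int = 3) -> List[Document]:
    """Return the top_k fresh documents whose similarity clears min_score."""
    q = _vector(query)
    scored = []
    for doc in docs:
        if (today - doc.updated).days > max_age_days:
            continue  # drop outdated context
        score = _cosine(q, _vector(doc.text))
        if score >= min_score:  # drop irrelevant context
            scored.append((score, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_prompt(query: str, context: List[Document]) -> Optional[str]:
    """Build a grounded prompt, or return None when nothing usable was retrieved."""
    if not context:
        return None  # caller should fall back instead of answering ungrounded
    sources = "\n".join(f"- {doc.text}" for doc in context)
    return f"Answer using only these sources:\n{sources}\n\nQuestion: {query}"


docs = [
    Document("refund policy for annual plans is 30 days", date(2025, 1, 10)),
    Document("refund policy for annual plans is 14 days", date(2021, 3, 1)),
    Document("office parking rules", date(2025, 2, 1)),
]
hits = retrieve("refund policy for annual plans", docs, today=date(2025, 6, 1))
print(build_prompt("refund policy for annual plans", hits))
```

Only the fresh refund document survives both filters. The stale policy and the unrelated parking note never reach the model.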

5. Organizational skill gaps and resistance

AI transformation is not just a technical challenge — it’s an organizational one.

Many enterprises underestimate:

- The need for AI literacy across teams

- The effort required to redesign workflows

- The importance of user trust and adoption

Employees often:

- Don’t trust AI outputs

- Don’t understand how to use the tools

- Or fear being replaced

As a result, adoption stalls — and even well-built systems go unused.

6. Constantly changing goals

AI projects are particularly vulnerable to shifting priorities.

Why?

Because they sit at the intersection of:

- Technology

- Business strategy

- Data infrastructure

Stakeholders frequently change direction mid-project:

- Expanding scope

- Redefining success metrics

- Adding new use cases

What starts as a focused initiative turns into a vague “AI transformation” — and vague projects don’t succeed.

7. Misunderstanding cost dynamics

Traditional SaaS scales efficiently. AI does not.

Every AI interaction incurs cost:

- Token usage

- Compute resources

- Embeddings

- Monitoring and logging

These costs scale linearly with usage, sometimes faster.

Real-world example:

Swan, an AI-powered GTM platform, reported $50,000/month in AI costs despite having a small team and a growing user base.

This leads to a critical shift in thinking:

AI products behave less like software and more like services with variable cost structures.

If you don’t model this early, growth can actually reduce profitability.
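A back-of-the-envelope model shows how this can happen. The sketch below assumes a flat subscription price and a per-token AI cost. Every number in it is hypothetical and chosen only to illustrate the shape of the problem, not taken from any real vendor or product.

```python
def monthly_margin(users: int, subscription_price: float, requests_per_user: int,
                   tokens_per_request: int, price_per_1k_tokens: float,
                   fixed_costs: float) -> float:
    """Monthly revenue minus usage-driven AI cost and fixed costs."""
    revenue = users * subscription_price
    ai_cost = users * requests_per_user * tokens_per_request / 1000 * price_per_1k_tokens
    return revenue - ai_cost - fixed_costs


# Hypothetical plan: each user pays $20/month but consumes about $30 of tokens.
PLAN = dict(
    subscription_price=20.0,
    requests_per_user=1500,
    tokens_per_request=2000,
    price_per_1k_tokens=0.01,
    fixed_costs=5000.0,
)

for users in (1_000, 5_000, 20_000):
    print(f"{users:>6} users: margin ${monthly_margin(users, **PLAN):,.0f}/month")
```

With these inputs, each new user adds more cost than revenue, so the loss widens as the user base grows. Running the same model with your own usage data before launch shows whether pricing, usage caps, or cheaper models need to change.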

Why do technology adoption strategies fail in enterprises

A common question in enterprise AI deployment is:

“Is 90% accuracy good enough?”

The answer depends entirely on context and risk.

- In a low-stakes application (e.g., food recognition), 90–95% accuracy may be perfectly acceptable

- In high-stakes environments (e.g., healthcare, finance, safety systems), even 99% may not be sufficient

AI systems must be evaluated against:

- Risk tolerance

- Consequences of failure

- Availability of human oversight

Without this context, accuracy metrics are meaningless.
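One way to put accuracy into context is to translate it into expected cost. The sketch below is a simplification that assumes every error costs the same and that human review catches a fixed share of errors. The scenarios and dollar figures are hypothetical.

```python
def expected_error_cost(accuracy: float, decisions: int, cost_per_error: float,
                        review_catch_rate: float = 0.0) -> float:
    """Expected cost of errors that slip past human review."""
    errors = decisions * (1 - accuracy)
    uncaught = errors * (1 - review_catch_rate)
    return uncaught * cost_per_error


# Hypothetical scenarios, 10,000 decisions each.
low_stakes = expected_error_cost(accuracy=0.90, decisions=10_000, cost_per_error=0.05)
high_stakes = expected_error_cost(accuracy=0.99, decisions=10_000, cost_per_error=5_000)
with_review = expected_error_cost(accuracy=0.99, decisions=10_000, cost_per_error=5_000,
                                  review_catch_rate=0.9)

print(f"Low stakes at 90%:              ${low_stakes:,.0f}")
print(f"High stakes at 99%:             ${high_stakes:,.0f}")
print(f"High stakes at 99% with review: ${with_review:,.0f}")
```

In this example the 90% system is the cheaper one to run, because the cost of each mistake differs by five orders of magnitude. Accuracy alone does not tell you which system is safe to ship.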

How to avoid enterprise AI failure

The difference between failure and success isn’t luck. It’s discipline.

Here’s what consistently works.

1. Build on purpose and strategy

Every AI initiative should answer:

- What problem are we solving?

- Why does it matter?

- How will we measure success?

If you can’t articulate value in terms of time, money, or efficiency, stop.

Just as importantly, define success before you build anything. Too many teams retrofit metrics after deployment, which makes it easy to justify weak outcomes. Clear success criteria force better decisions early — they shape scope, influence design choices, and prevent projects from drifting into vague “innovation” territory where effort is high, but impact is impossible to prove.

2. Validate early — not after launch

Successful AI teams treat evaluation as a core capability.

They measure:

- Truthfulness (is it correct?)

- Groundedness (is it based on real data?)

- Relevance (is it useful?)

And they measure continuously — not just once.

Critically, evaluation should mirror real-world usage — not just controlled test scenarios. That means testing with messy inputs, edge cases, and actual user behavior. Many AI systems appear reliable in demos but break under real conditions because they were never exposed to true variability. Early, realistic validation surfaces these gaps when they’re still cheap to fix — not after users lose trust.
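A small evaluation harness is enough to get started. The sketch below scores the three dimensions listed above with deliberately crude word-overlap proxies. Production teams typically use human raters or model-based graders instead, so read the scoring functions as placeholders. `EvalCase` and all function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass
class EvalCase:
    question: str
    context: str        # source text the answer should be based on
    expected_fact: str  # string a correct answer must contain
    answer: str         # what the system under test returned


def _words(text: str) -> Set[str]:
    return {w.strip(".,!?").lower() for w in text.split()}


def truthful(case: EvalCase) -> bool:
    """Crude proxy: the expected fact appears verbatim in the answer."""
    return case.expected_fact.lower() in case.answer.lower()


def groundedness(case: EvalCase) -> float:
    """Share of answer words that also appear in the source context."""
    answer_words = _words(case.answer)
    if not answer_words:
        return 0.0
    return len(answer_words & _words(case.context)) / len(answer_words)


def relevance(case: EvalCase) -> float:
    """Share of question words that the answer picks up."""
    question_words = _words(case.question)
    if not question_words:
        return 0.0
    return len(question_words & _words(case.answer)) / len(question_words)


def evaluate(cases: List[EvalCase]) -> Dict[str, float]:
    """Average each metric over the test set; rerun on every change."""
    n = len(cases)
    return {
        "truthfulness": sum(truthful(c) for c in cases) / n,
        "groundedness": sum(groundedness(c) for c in cases) / n,
        "relevance": sum(relevance(c) for c in cases) / n,
    }


cases = [
    EvalCase("How long do refunds take?", "Refunds are available within 30 days of purchase.",
             "30 days", "Refunds are available within 30 days."),
    EvalCase("How long do refunds take?", "Refunds are available within 30 days of purchase.",
             "30 days", "Refunds take 90 days."),
]
print(evaluate(cases))
```

The value is less in the specific metrics than in the habit: a fixed set of realistic cases, scored the same way on every release, so regressions show up before users see them.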

3. Start small, iterate quickly, scale what works

The most successful enterprise AI adoption examples follow a similar pattern:

- Identify a narrow, high-impact use case

- Build a focused solution

- Measure real outcomes

- Expand incrementally

Large-scale “AI transformation” programs rarely succeed without this foundation.

4. Invest in data infrastructure first

Before building AI features, ensure:

- Clean, structured data

- Accessible systems

- Clear ownership

- Legal compliance

This is not glamorous work — but it determines everything that comes after.

5. Design for trust and control

AI systems must be understandable and controllable.

This includes:

- Clear explanations (without overwhelming detail)

- Confidence indicators

- Fallback mechanisms

- Human-in-the-loop options

Trust is not automatic. It must be designed.
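In code, the confidence indicator, the fallback, and the human-in-the-loop option can start as a simple routing rule. This is a minimal sketch that assumes the model exposes a reasonably calibrated confidence score. The thresholds and the `Prediction` type are hypothetical and would need tuning per use case.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Prediction:
    answer: str
    confidence: float  # 0.0 to 1.0, assumed to be calibrated


FALLBACK_MESSAGE = "I'm not sure about this one. Passing it to a specialist."


def route(prediction: Prediction, auto_threshold: float = 0.9,
          review_threshold: float = 0.6) -> Tuple[str, str]:
    """Decide whether to answer automatically, ask a human, or fall back."""
    if prediction.confidence >= auto_threshold:
        return "auto", prediction.answer
    if prediction.confidence >= review_threshold:
        # Show the draft to a person before it reaches the user.
        return "human_review", prediction.answer
    return "fallback", FALLBACK_MESSAGE


for p in (Prediction("Approve claim", 0.97),
          Prediction("Approve claim", 0.72),
          Prediction("Approve claim", 0.31)):
    print(route(p))
```

Higher-risk use cases would raise both thresholds, so more decisions pass through a person.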

6. Align with business outcomes — not feature usage

High engagement does not equal value.

Instead, measure:

- Revenue impact

- Cost reduction

- Conversion improvement

- Time savings

If your AI doesn’t influence a business metric within months, it needs to be rethought.

7. Build a culture of learning

AI is not a one-time implementation.

It requires:

- Continuous improvement

- Monitoring and iteration

- Cross-functional collaboration

Organizations that treat AI as a long-term capability — not a project — are the ones that succeed.

Final thoughts on enterprise AI deployment challenges: Less hype, more value

AI in the enterprise isn’t a question of if anymore. It’s a question of how well.

Right now, we’re seeing a wave of companies rushing to integrate AI features to keep up with competitors and rising user expectations. But speed without direction leads to exactly what we’re already seeing across the market: products filled with “AI-powered” features that don’t improve anything.

Or worse — they degrade the experience.

The pattern is always the same. Teams start with the technology, not the problem. They ship features without validating real user value. They underestimate the importance of data, guardrails, and design. And they measure success by usage instead of outcomes.

That’s how you end up in pilot purgatory — or with features that users simply ignore.

The companies that will win in this new wave of enterprise AI will take a fundamentally different approach.

They will:

- Treat AI as a means to solve real problems, not a feature to showcase

- Focus on domain-specific value instead of replicating what users already get from ChatGPT

- Invest in data, evaluation, and control mechanisms before scaling

- Design AI experiences that respect user expectations and build trust

- And most importantly — measure impact, not hype

Because in the end, users don’t care if something is powered by AI.

They care if it works.

If it saves them time.

If it helps them make better decisions.

If it makes their lives even slightly easier.

That’s the bar.

And in a landscape where most enterprise AI projects fail, meeting that bar consistently is what makes you the exception.

