AI is now central to product strategy across industries. Boards push it, competitors ship it, and customers expect smarter, faster, more personalized experiences. That pressure often leads companies to adopt AI for the wrong reasons.

The biggest mistake? Starting with technology instead of the problem. Teams jump into artificial intelligence development or generative AI solutions without clear value, ending up with features that look impressive but fail in practice—unused chatbots, weak recommendations, or automation that adds friction instead of removing it. The result: wasted budget and lost trust.

When discussing AI app development cost, there are really two questions:

- How much does it cost to build?

- Is it worth building at all?

Costs go far beyond the initial build estimate. AI becomes expensive in production: token usage, monitoring, guardrails, compliance, and ongoing maintenance all add up. Without real user or business value, even the best machine learning models or pre-trained models won’t justify the investment.

This guide helps decision-makers—CTOs, founders, and product leaders—budget smarter. It covers real cost ranges, key factors like AI model training, hidden expenses, compliance risks, and how to validate ideas before committing to full-scale development.

Key takeaways on AI development cost:

- In 2026, AI app development cost can range from roughly $10,000–$30,000+ for a PoC, $30,000–$100,000+ for an AI MVP, and $100,000–$500,000+ for enterprise-grade or highly regulated AI systems.

- The biggest AI app development cost factors are use case complexity, data readiness, integrations, compliance, model choice, and how much human oversight the solution needs.

- Reasoning-oriented AI systems often cost more than simpler AI features because token usage grows with longer prompts, multi-step workflows, retries, tool use, and validation loops.

- Launch is only part of the budget. AI products also require monitoring, evaluation, observability, prompt and workflow updates, governance, and in some cases retraining or fine-tuning.

- A proof-of-concept or narrow MVP is often the smartest starting point because it lets you test business value before scaling infrastructure and operational costs.

- The EU AI Act can materially increase delivery timelines and total cost of ownership, especially in healthcare, finance, HR, and other high-risk environments.

- The biggest strategic mistake is not spending too much on models. It is building AI before validating the problem, the data, and the value.

How much does it cost to develop an AI app in 2026?

Let’s start with the question most buyers are really asking: how much does it cost to develop an AI app?

The honest answer is that there is no single number. A lightweight AI proof of concept built on existing APIs is a very different project from a production-grade assistant integrated with internal systems, role-based access control, compliance requirements, human review, and observability.

That said, most companies still need directional ranges to start budgeting. Here is a practical way to think about AI app development pricing in 2026.

Typical AI software cost ranges

Before diving into specific numbers, it’s important to understand that AI costs don’t come from a single source. They are shaped across the entire artificial intelligence development process—from early-stage cost estimation and AI consulting services, through building with pre-trained models or more advanced machine learning models, to scaling generative AI solutions. Whether you’re relying on lightweight APIs or investing in deeper AI model training, the final cost reflects the choices you make at every stage. That’s why estimates vary widely—but there are still useful ranges that can help you benchmark what to expect.

| Project type | Directional cost range |
| --- | --- |
| AI discovery workshop | $3,000–$15,000 |
| Proof of concept/prototype | $10,000–$30,000+ |
| Narrow AI MVP | $30,000–$100,000+ |
| Production of an AI feature inside an existing app | $50,000–$150,000+ |
| Custom AI product/enterprise implementation | $100,000–$500,000+ |

These are not universal benchmarks. They are directional ranges intended to support early planning. The actual cost to build an AI app depends heavily on the problem you are solving, the quality of your data, the level of compliance involved, and whether the system needs to work reliably at scale.

A simple AI feature can be affordable. A serious AI system, especially in a regulated domain, gets expensive quickly.

Monthly operating costs after launch

This is where many teams underestimate reality.

AI operating costs can range from hundreds of dollars per month to tens of thousands, depending on:

- traffic volume

- model selection

- token consumption

- prompt length

- reasoning depth

- RAG architecture

- moderation

- logging and observability

- support and human review

A prototype can look cheap because usage is small and failure cases are limited. Production is where the real economics appear.
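To make the gap between prototype and production concrete, here is a minimal sketch of a monthly token-bill estimate. The prices (3 and 15 USD per million tokens) and both usage profiles are placeholder assumptions for illustration, not quotes from any provider, and the function ignores every non-token cost listed above.

```python
from dataclasses import dataclass


@dataclass
class UsageProfile:
    requests_per_day: int
    input_tokens_per_request: int
    output_tokens_per_request: int


def monthly_token_cost(
    usage: UsageProfile,
    input_price_per_million: float,   # USD per 1M input tokens (placeholder)
    output_price_per_million: float,  # USD per 1M output tokens (placeholder)
    days_per_month: int = 30,
) -> float:
    """Estimate the monthly model bill in USD from average usage figures."""
    requests = usage.requests_per_day * days_per_month
    input_cost = requests * usage.input_tokens_per_request * input_price_per_million / 1_000_000
    output_cost = requests * usage.output_tokens_per_request * output_price_per_million / 1_000_000
    return input_cost + output_cost


# Hypothetical profiles: a small pilot versus a live feature with longer prompts.
prototype = UsageProfile(requests_per_day=50, input_tokens_per_request=1_000, output_tokens_per_request=300)
production = UsageProfile(requests_per_day=20_000, input_tokens_per_request=4_000, output_tokens_per_request=600)

prototype_cost = monthly_token_cost(prototype, 3.0, 15.0)
production_cost = monthly_token_cost(production, 3.0, 15.0)
print(f"prototype: ${prototype_cost:,.2f}/month, production: ${production_cost:,.2f}/month")
```

With these made-up numbers the pilot costs about $11 per month and the production profile about $12,600 per month, before moderation, logging, or human review are counted.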

Why AI app development costs vary so much

One reason executives get mixed signals about AI software development costs is that the label “AI app” covers radically different things.

An AI note summarizer inside an existing SaaS product is one type of investment. A clinical assistant recommending medical tests, an HR screening workflow requiring transparency and oversight, or a financial decision-support tool subject to governance requirements are entirely different categories.

AI costs vary because the technology is only one part of the equation. The real budget is shaped by everything around the model: your data, your design decisions, your integration architecture, your legal obligations, your evaluation methods, and how much failure your business can tolerate.

And that last point matters more than people admit. If your app helps users discover a product, a certain level of imperfection may be acceptable. If it influences hiring, treatment, financial decisions, or safety outcomes, the acceptable margin for error becomes much smaller. That means more testing, more controls, more human oversight, and more cost.

What drives AI app development cost?

If you want to understand the cost factors in AI app development, stop thinking only about models. Models matter, but they are not the whole budget story.

1. Use case complexity

The more ambiguous the task, the more expensive the solution tends to become.

A basic content assistant or internal Q&A feature may be relatively fast to launch. But once you move into multi-step decision support, workflow automation, planning, reasoning, recommendations, or actions across multiple systems, costs rise fast.

That happens because complexity multiplies across the stack:

- more edge cases

- more orchestration logic

- more QA scenarios

- more need for fallback handling

- more monitoring once the feature is live

A good rule of thumb: if the AI feature needs to think, validate, retrieve, explain, and then trigger action, it will cost far more than a single-prompt experience.

2. Off-the-shelf APIs vs custom build

For many companies, the most cost-effective path is still to start with external APIs rather than building custom model infrastructure.

Using APIs can reduce time-to-market and keep early-stage risk lower. But there are trade-offs. You may face:

- less control over behavior

- recurring token costs

- vendor dependency

- limitations around domain specificity

- data governance questions

Custom models, fine-tuning, or private infrastructure can make sense when the use case is sensitive, differentiated, or high-volume enough to justify the investment. But moving from API-first experimentation to custom AI can significantly increase the budget.

3. Model choice

This is one of the most direct cost drivers.

Different models have different pricing, latency, accuracy, context limits, and operational trade-offs. A more advanced model may improve performance, but that does not automatically mean better business value.

I often see teams choose the most powerful model available before proving that a smaller model could do the job well enough. That is backwards. If a simpler model gets you 90% of the value at a fraction of the cost, that may be the better product decision.
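One way to put that principle into practice is to score each candidate model on the same evaluation set and pick the cheapest one that clears your quality bar. The sketch below assumes you already have such scores; the model labels, scores, and costs are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Candidate:
    name: str                    # hypothetical model label
    eval_score: float            # share of evaluation cases passed, 0..1
    cost_per_1k_requests: float  # USD, placeholder figure


def cheapest_good_enough(candidates: List[Candidate], min_score: float) -> Optional[Candidate]:
    """Return the lowest-cost candidate that meets the quality bar, or None."""
    eligible = [c for c in candidates if c.eval_score >= min_score]
    return min(eligible, key=lambda c: c.cost_per_1k_requests, default=None)


candidates = [
    Candidate("small", eval_score=0.88, cost_per_1k_requests=2.0),
    Candidate("medium", eval_score=0.92, cost_per_1k_requests=8.0),
    Candidate("large", eval_score=0.96, cost_per_1k_requests=40.0),
]

choice = cheapest_good_enough(candidates, min_score=0.90)
print(choice.name if choice else "no model meets the bar")
```

The useful part is not the code but the discipline: the quality bar is set by the business case first, and the model is chosen second.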

4. Reasoning AI and agentic workflows

This deserves its own section because it is one of the fastest-growing sources of budget overruns.

Why Reasoning AI increases costs

Reasoning-oriented AI workflows often look elegant in architecture diagrams but can be surprisingly expensive in production.

That is because these systems do not make a single model call. They often involve:

- planning

- retrieval

- tool use

- verification

- re-prompting

- output validation

- retry handling

Each extra step can increase token usage, introduce additional latency, and create more opportunities for failure. And as workflows become more agentic, costs compound.

In business terms, this means the model pricing page rarely tells you the real production cost. The apparent per-request price may look manageable, but once you add long prompts, chained calls, tool interactions, retries, and evaluation layers, the actual cost can be much higher than expected.
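A rough way to see the compounding effect is to price a workflow step by step rather than per request. The sketch below is illustrative only: the step names, token counts, retry rates, and prices are assumptions, and real workflows vary widely.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Step:
    name: str
    input_tokens: int
    output_tokens: int
    expected_attempts: float = 1.0  # 1.0 means no retries; 1.2 means 20% extra calls on average


def request_cost(steps: List[Step], input_price_per_million: float, output_price_per_million: float) -> float:
    """Sum the expected USD cost of every model call behind one user request."""
    total = 0.0
    for step in steps:
        per_call = (
            step.input_tokens * input_price_per_million
            + step.output_tokens * output_price_per_million
        ) / 1_000_000
        total += per_call * step.expected_attempts
    return total


# Placeholder prices in USD per 1M tokens.
INPUT_PRICE, OUTPUT_PRICE = 3.0, 15.0

single_prompt = [Step("answer", 1_500, 400)]
agentic = [
    Step("plan", 2_000, 300),
    Step("retrieve_and_read", 6_000, 200),
    Step("tool_call", 3_000, 300, expected_attempts=1.2),
    Step("verify", 4_000, 200),
    Step("final_answer", 5_000, 600, expected_attempts=1.1),
]

single_cost = request_cost(single_prompt, INPUT_PRICE, OUTPUT_PRICE)
agentic_cost = request_cost(agentic, INPUT_PRICE, OUTPUT_PRICE)
print(f"single: ${single_cost:.4f}, agentic: ${agentic_cost:.4f}, ratio: {agentic_cost / single_cost:.1f}x")
```

Under these assumptions the agentic flow costs roughly eight times more per request than the single prompt, even though both use the same model at the same list price.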

For some use cases, reasoning AI is the right answer. For others, it is overkill. A smaller model, a narrower workflow, or even well-designed traditional software logic can be far more cost-efficient.

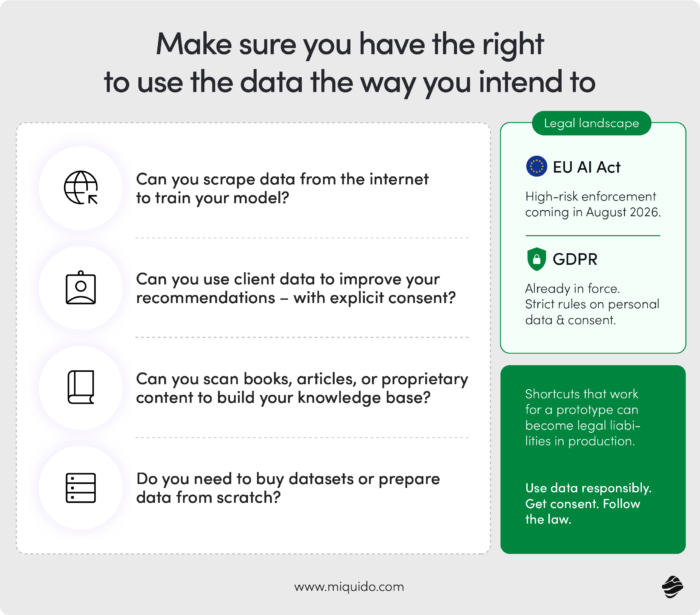

5. Data availability and quality

If your data is weak, your AI project becomes more expensive before it becomes useful.

This is one of the most consistent patterns I have seen. Companies want to move fast on AI, but the underlying data is incomplete, inconsistent, difficult to access, poorly labeled, or legally unclear. That creates delays and extra costs long before the product is ready.

In practice, the data work may include:

- identifying data sources

- cleaning and structuring data

- labeling or annotating examples

- handling missing or conflicting records

- mapping permissions and ownership

- preparing knowledge bases for RAG

- defining what data is legally usable

In many AI projects, this is the least glamorous work and one of the most important.

6. Integrations with internal systems

A standalone demo is relatively cheap. A production AI feature connected to your real business systems is not.

As soon as you integrate with CRMs, ERPs, internal databases, analytics systems, document repositories, payment systems, patient records, HR platforms, or customer support tools, the complexity rises. Integrations affect security, latency, permissions, data freshness, and testing scope.

This is often where an AI feature begins to become a real product rather than a prototype. It is also where budgets expand.

7. UI/UX and trust-building design

One of the most expensive myths in AI product development is that AI features are mainly a backend problem.

They are not.

If the AI is visible to users, design becomes a major cost factor because people need to understand what the system is doing, when to trust it, and what happens when it is wrong.

That usually means investing in things like:

- clear user flows

- confidence or uncertainty cues

- citations or source references where relevant

- edit and correction options

- graceful fallback states

- human escalation for high-stakes cases

- messaging that sets expectations properly

This is not decoration. It is the layer that protects trust.

8. Infrastructure and cloud costs

Beyond model calls, there is the wider infrastructure bill.

Depending on the architecture, you may need:

- application hosting

- background processing

- databases

- vector stores

- search infrastructure

- logging systems

- evaluation pipelines

- alerting and monitoring tools

- data processing workflows

At low scale, these costs may be modest. At higher scale, they become a serious operating expense.

9. Compliance and legal requirements

The more regulated the use case, the higher the cost.

That applies not only to building the AI feature but also to documenting, reviewing, validating, and maintaining it responsibly. This is especially relevant in healthcare, finance, HR, insurance, and safety-related environments.

10. Testing, guardrails, evaluation, and observability

Many teams still budget for AI as if QA were a standard software testing problem. It is not.

Because AI systems are probabilistic, you need broader forms of validation. It is not enough that the feature worked in a handful of demo scenarios. You need to know how it behaves across messy, real-world inputs.

That means budgeting for:

- prompt and workflow testing

- safety checks

- evaluator functions

- hallucination or grounding checks

- red-teaming

- bias or failure analysis

- output logging

- production observability

This work is easy to underbudget and painful to skip.
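As a starting point, even a very small evaluation harness beats a handful of manual demo checks. The sketch below is a simplified illustration: `stub_generate` stands in for your real model call, the cases are invented, and a substring match is only a crude proxy for groundedness, not a full evaluator.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical evaluation cases: each lists terms a grounded answer must mention.
EVAL_CASES: List[Dict] = [
    {"question": "What is the refund window?", "must_contain": ["30 days"]},
    {"question": "Which plan includes SSO?", "must_contain": ["enterprise"]},
    {"question": "Who approves discounts above 20%?", "must_contain": ["finance", "approval"]},
]


def run_eval(generate: Callable[[str], str], cases: List[Dict]) -> Tuple[float, List[str]]:
    """Return the pass rate and the questions that failed."""
    failed = []
    for case in cases:
        answer = generate(case["question"]).lower()
        if not all(term.lower() in answer for term in case["must_contain"]):
            failed.append(case["question"])
    pass_rate = (len(cases) - len(failed)) / len(cases) if cases else 0.0
    return pass_rate, failed


def stub_generate(question: str) -> str:
    """Stand-in for a real model call, returning canned answers."""
    canned = {
        "What is the refund window?": "Refunds are accepted within 30 days of purchase.",
        "Which plan includes SSO?": "SSO is part of the Enterprise plan.",
        "Who approves discounts above 20%?": "Your account manager can decide.",
    }
    return canned.get(question, "")


pass_rate, failed_questions = run_eval(stub_generate, EVAL_CASES)
print(f"pass rate: {pass_rate:.0%}, failed: {failed_questions}")
```

Running the same cases after every prompt or model change is what turns this from a demo check into a regression test.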

11. Maintenance and ongoing monitoring

Standard software maintenance is one thing. AI app maintenance cost is another.

After launch, you still need to watch quality, provider changes, usage patterns, token burn, retrieval performance, user behavior, and failure rates. AI systems change over time because the world around them changes.

That turns maintenance into a core part of the business model, not a footnote.

AI vs traditional software logic — when is AI really worth the cost?

This is one of the most important budgeting questions a company can ask, and too few teams ask it early enough.

My test is simple:

- if the task requires judgment on unstructured data, AI may add real value

- if the task follows a clear decision tree with structured inputs, traditional software logic is usually cheaper, faster, and more reliable

That distinction matters because many teams reach for AI when a deterministic system would work better.

Sorting, filtering, routing, validation against fixed rules, threshold logic, workflow automations, or calculations based on structured inputs often do not need AI at all. In these cases, traditional software is not only cheaper to build but also easier to test, explain, and maintain.
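For comparison, here is what a structured routing decision looks like as plain code. The ticket fields, thresholds, and queue names are hypothetical; the point is that every branch is explicit, testable, and free to run.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    category: str       # structured field, e.g. picked from a dropdown
    order_value: float  # USD
    is_vip: bool


def route_ticket(ticket: Ticket) -> str:
    """Route a support ticket using fixed rules on structured inputs."""
    if ticket.category == "billing" and ticket.order_value >= 1_000:
        return "finance-escalation"
    if ticket.is_vip:
        return "priority-support"
    if ticket.category in {"billing", "shipping"}:
        return f"{ticket.category}-queue"
    return "general-queue"


print(route_ticket(Ticket(category="billing", order_value=2_500.0, is_vip=False)))
```

If the category had to be inferred from a free-text complaint instead of a dropdown, that interpretation step is where AI could start to earn its cost.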

AI starts to justify itself when you truly need it to interpret ambiguity: natural language, images, fuzzy preferences, intent detection, semantic search, summarization, recommendations from messy input, or decision support grounded in complex context.

There is another useful test I recommend:

If the feature can be replaced by ChatGPT without any meaningful loss of value, it probably does not justify custom AI investment.

That sounds blunt, but it is a useful strategic filter. Your moat is not “we use an LLM.” Your moat is domain knowledge, proprietary workflows, trusted data, user context, or specific business logic that generic AI tools do not have.

The right business question is not “can we add AI?” It is:

Will this feature save the user time, money, or energy?

If it does not materially improve one of those three, it may not be worth the cost.

AI app development cost breakdown by stage

A useful way to estimate the cost to build an AI app is to break the work into stages rather than treating it as a single technical block.

Discovery and strategy

This is where you decide whether AI should exist at all, what problem it solves, how value will be measured, and what constraints matter.

At this stage, the work often includes:

- use case selection

- stakeholder alignment

- feasibility analysis

- data readiness assessment

- ROI hypothesis

- solution direction

- risk mapping

Typical cost: a few thousand to low tens of thousands of dollars

Teams that rush past discovery often pay for that mistake later.

Data collection and preparation

This is often where the “boring work” lives—and where many budgets expand.

Depending on the product, this stage may involve preparing structured data, documents, knowledge bases, taxonomies, labels, user permissions, metadata, or retrieval pipelines.

If you are using RAG, this step includes much more than uploading files into a vector store. Context needs to be prepared carefully. Chunking, metadata, relevance, freshness, and access rules matter.
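To illustrate why this is more than a file upload, the sketch below chunks a document with overlap, attaches source, freshness, and access metadata, and filters chunks before retrieval. It is a simplified example under stated assumptions: word-based chunking, a single role per user, and hypothetical field names. Embedding and vector search are deliberately left out.

```python
from dataclasses import dataclass
from datetime import date
from typing import FrozenSet, Iterable, List


@dataclass
class Chunk:
    text: str
    source: str
    updated: date
    allowed_roles: FrozenSet[str]


def chunk_document(text: str, source: str, updated: date, allowed_roles: Iterable[str],
                   max_words: int = 120, overlap: int = 20) -> List[Chunk]:
    """Split text into overlapping word windows, each carrying its metadata."""
    if overlap >= max_words:
        raise ValueError("overlap must be smaller than max_words")
    words = text.split()
    step = max_words - overlap
    roles = frozenset(allowed_roles)
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(Chunk(" ".join(words[start:start + max_words]), source, updated, roles))
        if start + max_words >= len(words):
            break  # the last window already reached the end of the document
    return chunks


def visible_chunks(chunks: List[Chunk], user_role: str, not_older_than: date) -> List[Chunk]:
    """Keep only chunks the user may see and that are fresh enough."""
    return [c for c in chunks if user_role in c.allowed_roles and c.updated >= not_older_than]


document = " ".join(f"w{i}" for i in range(250))  # stand-in for real document text
chunks = chunk_document(document, "hr-policy.md", date(2025, 1, 10), {"hr"})
print(len(chunks), len(visible_chunks(chunks, "sales", date(2024, 1, 1))))
```

Each of those metadata fields has to come from somewhere, which is why permission mapping and freshness tracking show up in the budget.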

Design and prototyping

Designing AI well is not about making it look futuristic. It is about making it understandable and useful.

You need to design for:

- confidence

- uncertainty

- user control

- explanation

- error recovery

- trust calibration

This is especially important in mobile products, where intrusive AI feels worse than invisible AI.

Development

This includes the normal engineering work: front-end, back-end, APIs, state handling, authentication, analytics, and release readiness.

The difference is that AI development often includes orchestration logic, prompt design, tool invocation logic, and more error handling than typical product features.

Model integration and orchestration

This is where your application begins to work with models, retrieval systems, tool use, and workflow logic.

Costs vary depending on whether you are building:

- a single-turn assistant

- a RAG-based feature

- a structured extraction workflow

- a decision engine

- an agentic multi-step system

This is also where costs can escalate quietly because each extra capability tends to add more evaluation and failure handling requirements.

QA, evaluation, and guardrails

This stage is more important than many teams expect. AI needs testing not just for functionality, but for reliability, safety, and quality under messy conditions.

That usually means validating:

- truthfulness

- groundedness

- relevance

- coverage

- edge-case behavior

- moderation compliance

- resilience against misuse

Deployment

Production deployment is where infrastructure, security, analytics, monitoring, and incident readiness become real.

A working prototype is not the same as a production service that the business can rely on.

Maintenance and improvement

This is where AI app maintenance cost becomes part of the long-term budget.

You may need to update prompts, change models, improve retrieval quality, refine workflows, add evaluators, reduce token usage, or introduce human review paths as the product matures.

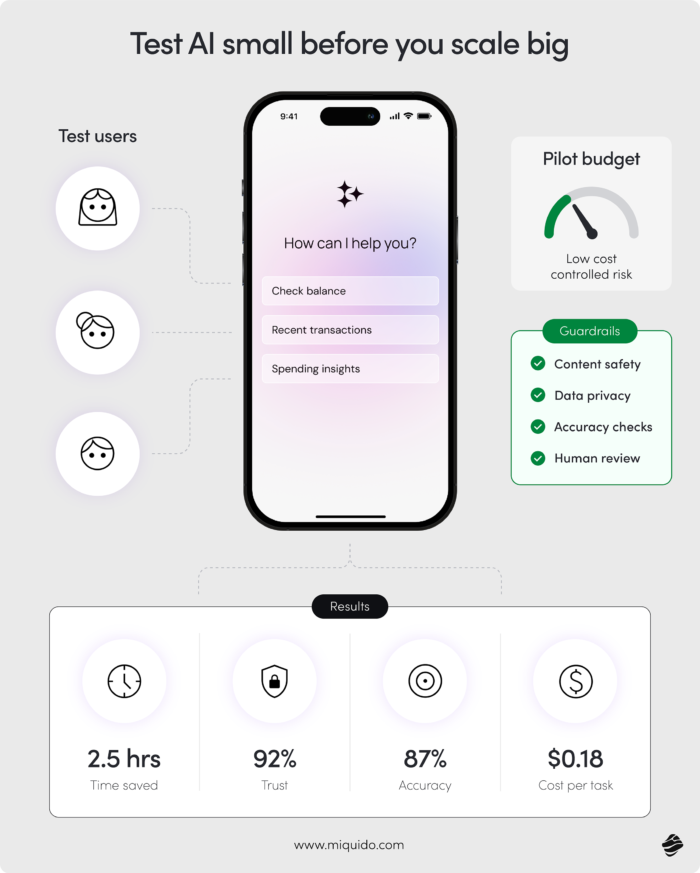

What is the most cost-effective way to test AI before fully investing?

Most companies approach this backwards. They treat AI like a standard app development project—define the scope, build the feature, and launch.

That’s a mistake.

AI is fundamentally different. You’re not just building software—you’re testing uncertainty: model behavior, data quality, user trust, and real-world usefulness.

That’s why my answer is simple:

Do not build the full AI product first.

The most expensive way to fail is to scale too early.

Start with an AI discovery workshop

Before you write code, clarify:

- what problem you are solving

- whether AI is actually needed

- what data exists

- what business metric should move if the feature works

- what success looks like in the first 90 days

This is usually one of the cheapest and highest-leverage steps in the entire process.

Build a proof of concept

A PoC is useful when the main uncertainty is technical feasibility.

Typical AI proof of concept cost: $10,000–$30,000+

A good PoC helps answer questions like:

- Can the model perform the task well enough?

- Do we have the data we need?

- What accuracy or usefulness can we realistically achieve?

- What are the obvious failure modes?

What a PoC should not do is pretend to be a product.

Launch a narrow MVP

A strong AI MVP can cost $30,000–$100,000+, depending on scope.

The key is to keep it narrow. Do not try to automate the whole business process. Pick one workflow, one user segment, one constrained use case. Prove value there first.

That could mean:

- an assistant for one support flow

- AI summarization for one document type

- recommendations for one eCommerce journey

- one HR screening step instead of the entire hiring process

Use APIs before building custom infrastructure

If the use case is still being validated, API-first experimentation is often the smartest move. It keeps the upfront investment lower and helps you learn faster.

There is no strategic pride in building custom AI infrastructure before you know users care.

Measure value before scaling

Do not scale because the demo looked impressive. Scale because the feature proved that it:

- saves time

- reduces cost

- improves conversion

- increases retention

- reduces support load

- improves the quality of decisions or outputs

In other words: validate ROI before scaling complexity.

Hidden costs companies often miss

This is the part many budgets fail to capture.

A pricing page may show token costs. The real cost of building and running an AI app is almost always higher than those numbers suggest.

Retries and failure handling

In production, not every call works cleanly. Outputs may need to be retried, reformatted, revalidated, or routed to fallback logic. That adds both engineering cost and operating cost.
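A minimal sketch of that pattern is shown below: call the model, validate the output, retry a bounded number of times, and hand off to fallback logic if nothing valid comes back. The `call_model` parameter and the flaky stub are assumptions standing in for a real provider client; every retry is a paid call.

```python
import json
from typing import Callable, Iterable, List, Optional, Tuple


def call_with_validation(call_model: Callable[[str], str], prompt: str,
                         required_keys: Iterable[str],
                         max_attempts: int = 3) -> Tuple[Optional[dict], int]:
    """Return (parsed output or None, number of model calls made)."""
    required = set(required_keys)
    for attempt in range(1, max_attempts + 1):
        raw = call_model(prompt)
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed output: spend another call
        if isinstance(data, dict) and required.issubset(data):
            return data, attempt
    return None, max_attempts  # caller should route to fallback logic


def make_flaky_model(responses: List[str]) -> Callable[[str], str]:
    """Hypothetical stub that replays canned responses in order."""
    queue = iter(responses)
    return lambda prompt: next(queue)


model = make_flaky_model(["not json at all", '{"summary": "ok", "priority": "low"}'])
result, calls_used = call_with_validation(model, "Summarize the ticket as JSON.", {"summary", "priority"})
print(result, calls_used)
```

Tracking `calls_used` in production tells you how much of the token bill is retries rather than useful work.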

Moderation and safety layers

If users can input open-ended text, upload content, or trigger external actions, you may need moderation systems and safety checks. These are not optional in many products.

RAG and embeddings

Retrieval systems add value, but they also add cost. Embeddings, storage, indexing, query handling, freshness management, and permission filtering all need to be maintained.

Observability and logging

If you cannot see what the system is doing, you cannot improve or govern it. Observability is essential, especially once AI touches real user workflows.

Human review and fallback workflows

In high-stakes environments, AI often needs human oversight. That means operational design, escalation paths, training, and staffing considerations—not just engineering effort.

Prompt and version management

Prompts, retrieval settings, model versions, and workflow logic evolve over time. Without versioning discipline, quality becomes unstable, and debugging becomes painful.
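One lightweight approach, sketched here as an assumption rather than a standard, is to fingerprint the full configuration behind each response and store that fingerprint with every logged output. The field names and the model label are hypothetical.

```python
import hashlib
import json


def config_fingerprint(prompt_template: str, model: str, retrieval_top_k: int) -> str:
    """Return a short, stable ID for one exact prompt/model/retrieval setup."""
    payload = json.dumps(
        {"prompt": prompt_template, "model": model, "top_k": retrieval_top_k},
        sort_keys=True,  # stable key order keeps the hash reproducible
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


v1 = config_fingerprint("Summarize the ticket: {ticket}", "example-model-a", 5)
v2 = config_fingerprint("Summarize the ticket in two sentences: {ticket}", "example-model-a", 5)
print(v1, v2, v1 != v2)
```

When quality shifts, comparing fingerprints across logged outputs shows whether the prompt, the model, or the retrieval settings changed.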

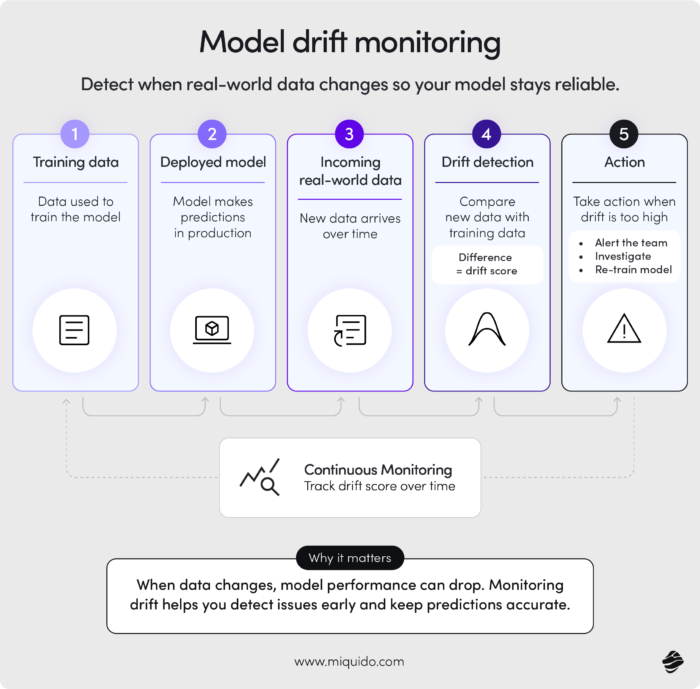

Model drift monitoring

Quality can decline as context, user behavior, and data change. That means you may need recurring monitoring, evaluation, and sometimes retraining or fine-tuning.

Compliance work

Documentation, audits, governance, internal approvals, legal review, and policy alignment all add time and cost.

Higher token bills from reasoning AI

This one is underestimated constantly. Teams model one elegant use case, then discover that real production behavior includes longer prompts, retries, tool use, branching flows, and much higher token consumption.

Change management and adoption

Sometimes the AI works, but the organization is not ready. People need training, new processes, clearer responsibilities, or better trust in the outputs. Adoption is not free.

The EU AI Act and how it changes AI budgeting in Europe

If you are building AI products in Europe, the EU AI Act is no longer something you can treat as abstract background noise. It can materially affect both delivery timelines and budgets.

This matters especially for products in areas like:

- healthcare

- finance

- HR and recruitment

- insurance

- education

- safety-related systems

The impact is highest where the system could meaningfully affect people’s rights, access, safety, or important decisions.

How the EU AI Act affects cost

The cost is not just in legal review. It can influence the entire operating model around the AI feature.

Practical cost implications may include:

- documentation requirements

- governance processes

- risk management procedures

- transparency measures

- human oversight design

- testing and validation

- logging and traceability

- internal controls

- legal and compliance review

For some teams, this will increase build cost. For others, it will mainly expand the total cost of ownership after launch.

That is the key budgeting point: the EU AI Act should be treated as part of the total cost of ownership, not just launch cost.

You do not need to turn every AI initiative into a legal project. But if you ignore compliance until late in the delivery process, you risk both delays and expensive rework.

How much does AI app maintenance cost?

This is one of the most overlooked questions in AI budgeting.

A lot of teams ask, “How much will it cost to build?” Far too few ask, “How much will it cost to keep this useful, safe, and cost-effective after launch?”

For many products, a practical benchmark is:

- around 15–25% of the initial development cost annually

- or monthly ranges from hundreds to tens of thousands of dollars, depending on scale and complexity
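As a quick worked example of the 15–25% benchmark, the sketch below converts an initial build cost into an annual and monthly maintenance range. The $80,000 build cost is a hypothetical figure.

```python
from typing import Tuple


def annual_maintenance_range(initial_build_cost: float,
                             low_share: float = 0.15,
                             high_share: float = 0.25) -> Tuple[float, float]:
    """Apply the 15-25% rule of thumb to an initial build cost (USD)."""
    return initial_build_cost * low_share, initial_build_cost * high_share


low, high = annual_maintenance_range(80_000)  # hypothetical MVP budget
print(f"annual: ${low:,.0f} to ${high:,.0f}; monthly: ${low / 12:,.0f} to ${high / 12:,.0f}")
```

For an $80,000 build this gives roughly $12,000 to $20,000 per year. Usage-based costs such as tokens scale with traffic, so treat them as a separate line from this percentage.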

Why AI maintenance is often higher than standard software maintenance

With normal software, maintenance usually means bug fixes, security updates, small improvements, and compatibility work.

With AI, maintenance may also include:

- monitoring output quality

- prompt and workflow updates

- model or provider changes

- cost optimization

- retrieval tuning

- moderation adjustments

- recurring evaluation

- incident analysis

- retraining or fine-tuning where relevant

- governance and documentation updates

This is why AI app maintenance costs often exceed standard maintenance expectations.

Model drift monitoring

This deserves a specific mention.

Model quality can decline over time because the world changes. User behavior changes. Data changes. Business context changes. Even if the model itself stays the same, the environment around it does not.

That is why maintenance budgets should include:

- drift detection

- observability

- recurring evaluations

- human review of samples

- retraining or reconfiguration if performance drops

If your product depends on AI outputs being trusted, this is not a luxury. It is part of the operating model.
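A simple form of drift detection compares recent evaluation scores against a baseline captured at launch. The sketch below assumes you already score a sample of outputs on a 0 to 1 scale; the scores and the 0.05 threshold are illustrative, not recommended values.

```python
from statistics import mean
from typing import Sequence, Tuple


def drift_alert(baseline_scores: Sequence[float], recent_scores: Sequence[float],
                max_drop: float = 0.05) -> Tuple[bool, float]:
    """Return (alert, drop), where drop is baseline mean minus recent mean."""
    drop = mean(baseline_scores) - mean(recent_scores)
    return drop > max_drop, drop


baseline = [0.92, 0.90, 0.94]  # hypothetical weekly scores at launch
recent = [0.85, 0.83, 0.84]    # hypothetical scores from the last three weeks

alert, drop = drift_alert(baseline, recent)
print(f"alert={alert}, drop={drop:.2f}")
```

An alert like this does not say why quality fell. It only tells you when to pull samples for human review.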

How to estimate ROI from AI investments

This is where many AI initiatives become strategically weak.

Companies often measure AI usage, internal excitement, or perceived innovation. Those are not useless signals, but they are not enough.

The real question is whether the AI feature changes outcomes.

What should ROI be tied to?

A serious AI project ROI conversation usually includes metrics such as:

- time saved

- cost reduction

- support deflection

- conversion uplift

- retention improvement

- revenue increase

- employee productivity

- operational efficiency

The exact metric depends on the use case. An AI support assistant should not be judged the same way as a document analysis tool or a personalization engine.
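Whatever metric you choose, it helps to translate it into money and compare it with the full cost of the feature. The sketch below is a simplified first-year model with hypothetical numbers; it assumes a constant monthly benefit and run cost, which real products rarely have.

```python
import math
from typing import Optional


def first_year_roi(build_cost: float, monthly_run_cost: float, monthly_benefit: float) -> float:
    """Return first-year ROI as a fraction: (benefit - cost) / cost."""
    total_cost = build_cost + 12 * monthly_run_cost
    total_benefit = 12 * monthly_benefit
    return (total_benefit - total_cost) / total_cost


def payback_months(build_cost: float, monthly_run_cost: float, monthly_benefit: float) -> Optional[int]:
    """Return whole months needed to recover the build cost, or None if never."""
    net_monthly = monthly_benefit - monthly_run_cost
    if net_monthly <= 0:
        return None
    return math.ceil(build_cost / net_monthly)


# Hypothetical support assistant: $60k build, $2k/month to run, $9k/month in saved agent time.
roi = first_year_roi(60_000, 2_000, 9_000)
months = payback_months(60_000, 2_000, 9_000)
print(f"first-year ROI: {roi:.0%}, payback: {months} months")
```

With these assumptions the feature returns about 29% in year one and pays back in nine months. If the run cost exceeds the benefit, the function returns `None`, which is the signal not to scale.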

Measure value, not just feature usage

This distinction matters. A chatbot may show high engagement because users are struggling to complete tasks through normal navigation. That is not success. That may be friction disguised as adoption.

I would look at three layers of measurement.

First, user value. Is the feature saving time, money, or energy compared with the previous method?

Second, business value. Is it moving a metric that matters to the P&L or to operational performance?

Third, AI-specific quality. Is the output truthful, grounded, relevant, and useful enough to sustain trust?

If you cannot measure on all three layers, the feature may still be a demo rather than a real product capability.

![[header] ai app development costs guide in 2026](https://www.miquido.com/wp-content/uploads/2024/04/header-ai-app-development-costs-guide-in-2026-1920x1280.jpg)